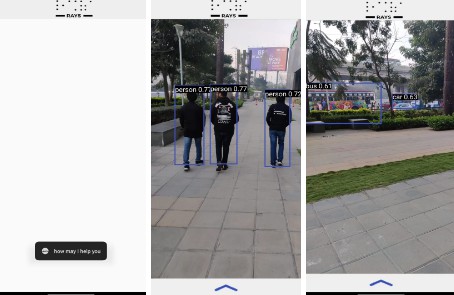

RAYS

RAYS – An Advanced Automated Navigation for Visually Impaired People

Welcome to RAYS, an advanced automated navigation app for visually impaired people. RAYS is a portable, lightweight, speech-driven navigation app for Android, built with TensorFlow Lite. It uses speech recognition to carry out tasks and guide users to their destination.

RAYS is designed to help visually impaired people navigate their surroundings. The system uses sensors such as cameras, microphones, and ultrasonic sensors to gather information about the environment, and it uses speech synthesis to give the user spoken feedback and instructions.

Three essential commands

#Start Detection or Start Detecting - Triggers Object Detection

#Stop Detection or Stop Detecting - Halts Object Detection

#Navigate to [Desired Location] - Navigates automatically to the location the user requests
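The three commands above could be matched against a speech transcript roughly as follows. This is a minimal sketch, not the RAYS implementation: the class `VoiceCommandParser`, its `Action` enum, and the `Command` type are hypothetical names introduced for illustration, and the transcript is assumed to arrive as plain text from whatever speech recogniser the app uses.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical parser for the three spoken commands; not taken from the RAYS source. */
final class VoiceCommandParser {

    enum Action { START_DETECTION, STOP_DETECTION, NAVIGATE }

    /** A recognised command; destination is non-null only for NAVIGATE. */
    static final class Command {
        final Action action;
        final String destination;

        Command(Action action, String destination) {
            this.action = action;
            this.destination = destination;
        }
    }

    // Case-insensitive patterns covering both "Detection" and "Detecting".
    private static final Pattern START =
            Pattern.compile("^start detect(ion|ing)$", Pattern.CASE_INSENSITIVE);
    private static final Pattern STOP =
            Pattern.compile("^stop detect(ion|ing)$", Pattern.CASE_INSENSITIVE);
    private static final Pattern NAVIGATE =
            Pattern.compile("^navigate to (.+)$", Pattern.CASE_INSENSITIVE);

    /** Maps a transcript to a command, or returns empty if nothing matches. */
    static Optional<Command> parse(String transcript) {
        if (transcript == null) {
            return Optional.empty();
        }
        // Trim and collapse repeated whitespace before matching.
        String text = transcript.trim().replaceAll("\\s+", " ");

        if (START.matcher(text).matches()) {
            return Optional.of(new Command(Action.START_DETECTION, null));
        }
        if (STOP.matcher(text).matches()) {
            return Optional.of(new Command(Action.STOP_DETECTION, null));
        }
        Matcher nav = NAVIGATE.matcher(text);
        if (nav.matches()) {
            // Everything after "navigate to" is treated as the destination.
            return Optional.of(new Command(Action.NAVIGATE, nav.group(1)));
        }
        return Optional.empty();
    }
}
```

Returning an empty `Optional` for unrecognised speech lets the caller decide whether to ignore the input or ask the user to repeat the command.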

We have equipped RAYS with many useful features.

The Android app is built with a simple, minimal UI, and the emphasis is placed on speech recognition and synthesis.

- When the app opens, the RAYS assistant is initialised.

RAYS has many features; to name a few:

On the ‘Start Detection’ command, RAYS enables its object detection. The detector is built on the quantized, state-of-the-art EfficientNet-Lite4 architecture, which achieves 80.4% ImageNet top-1 accuracy while still running in real time (e.g. 30 ms/image). It is trained on COCO 2017, covering over 90 general objects encountered in daily life, and it can also be custom trained.
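To illustrate how detector output might be turned into spoken feedback, here is a minimal sketch. It assumes each detection is reduced to a label and a confidence score; the `DetectionAnnouncer` class, the `Detection` type, and the `minScore` and `maxItems` parameters are hypothetical and not taken from the RAYS code. The returned sentence would then be handed to the app's speech synthesis.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Hypothetical helper that turns detector output into a sentence to be spoken. */
final class DetectionAnnouncer {

    /** Assumed shape of one detection: a class label and a confidence in [0, 1]. */
    static final class Detection {
        final String label;
        final float score;

        Detection(String label, float score) {
            this.label = label;
            this.score = score;
        }
    }

    /**
     * Keeps detections scoring at least minScore, orders them by confidence,
     * and names at most maxItems distinct labels.
     */
    static String announce(List<Detection> detections, float minScore, int maxItems) {
        List<Detection> kept = new ArrayList<>();
        for (Detection d : detections) {
            if (d.score >= minScore) {
                kept.add(d);
            }
        }
        // Most confident detections first.
        kept.sort(Comparator.comparingDouble((Detection d) -> d.score).reversed());

        List<String> labels = new ArrayList<>();
        for (Detection d : kept) {
            if (labels.size() == maxItems) {
                break;
            }
            // Mention each object class only once.
            if (!labels.contains(d.label)) {
                labels.add(d.label);
            }
        }
        if (labels.isEmpty()) {
            return "No objects detected";
        }
        return "Detected " + String.join(", ", labels);
    }
}
```

Capping the number of labels keeps each announcement short, which matters when the user is listening while walking.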

The detector can be halted with the ‘Stop Detection’ command.

On the ‘Navigate to [Place name]’ command, navigation starts automatically through Google Maps with precise voice guidance.
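One way to hand a spoken destination to Google Maps is through its `google.navigation:` intent URI. The sketch below only builds that URI string in plain Java. The `NavigationUri` class is a hypothetical helper, and the walking mode (`mode=w`) is an assumption; how RAYS actually launches navigation is not described here.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper that builds a Google Maps turn-by-turn navigation URI. */
final class NavigationUri {

    /** Returns a "google.navigation:" URI for the given place name, in walking mode. */
    static String build(String destination) {
        if (destination == null || destination.trim().isEmpty()) {
            throw new IllegalArgumentException("destination must not be blank");
        }
        try {
            // URLEncoder turns spaces into '+' and escapes reserved characters.
            String query = URLEncoder.encode(destination.trim(), StandardCharsets.UTF_8.name());
            // mode=w requests walking directions (an assumption for this use case).
            return "google.navigation:q=" + query + "&mode=w";
        } catch (UnsupportedEncodingException e) {
            // UTF-8 is always available on the JVM, so this should never happen.
            throw new IllegalStateException(e);
        }
    }
}
```

On Android, the resulting string would typically be passed to `Uri.parse(...)` and used in an `Intent` with `Intent.ACTION_VIEW`, with `setPackage("com.google.android.apps.maps")` to target the Google Maps app.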

RAYS is only a start, and the features described here are a few of many. It can be extended and improved with a wide range of features according to users' needs, and we'll never stop innovating.