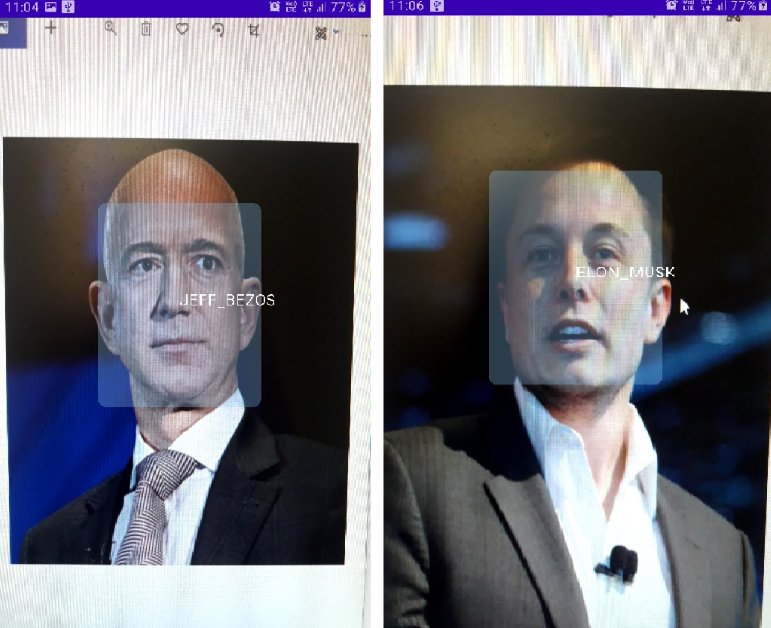

FaceRecognition_With_FaceNet_Android

Store images of the people you would like to recognize, and the app will use these images to classify those people. We don't need to modify the app or retrain any ML model to add more people (subjects) for classification.

If you're an ML developer, you might have heard about FaceNet, Google's state-of-the-art model for generating face embeddings. In this project, we'll use the FaceNet model on Android to generate embeddings (fixed-size vectors) that hold information about the face.

The accuracy of this face recognition system (with FaceNet) may not be very high. Under the hood, we only compare the angles between embedding vectors, so do not expect the results to be highly accurate.

About FaceNet

So, the aim of the FaceNet model is to generate a 128-dimensional vector for a given face. It takes in a 160 × 160 RGB image and outputs an array with 128 elements. How is this going to help us in our face recognition project?
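As a rough illustration of what "takes in a 160 × 160 RGB image" means in code, the sketch below turns a face crop given as packed ARGB pixels (the row-major format that Android's `Bitmap.getPixels` fills) into a flat `FloatArray` of 160 × 160 × 3 values. The normalization, per-image standardization (subtract the mean, divide by the standard deviation), is an assumption: it is a common choice for FaceNet models, but check what your `.h5` model was trained with. `preprocessFace` is a hypothetical name, not part of this app.

```kotlin
import kotlin.math.max
import kotlin.math.sqrt

// FaceNet takes a 160 x 160 RGB face crop.
const val FACENET_INPUT_SIZE = 160

// Hypothetical helper: converts a size x size crop of packed ARGB pixels (row-major,
// one Int per pixel) into a flat [R, G, B, R, G, B, ...] FloatArray, then applies
// per-image standardization (an ASSUMED normalization; match your model's training).
fun preprocessFace(pixels: IntArray, size: Int = FACENET_INPUT_SIZE): FloatArray {
    require(pixels.size == size * size) { "Expected ${size * size} pixels, got ${pixels.size}" }
    val out = FloatArray(size * size * 3)
    for (i in pixels.indices) {
        val p = pixels[i]
        out[i * 3] = ((p shr 16) and 0xFF).toFloat()     // red
        out[i * 3 + 1] = ((p shr 8) and 0xFF).toFloat()  // green
        out[i * 3 + 2] = (p and 0xFF).toFloat()          // blue
    }
    // Mean and standard deviation over all channel values of this one image.
    var sum = 0.0
    for (v in out) sum += v
    val mean = sum / out.size
    var sq = 0.0
    for (v in out) sq += (v - mean) * (v - mean)
    // Floor the std so a flat (single-colour) crop doesn't divide by zero.
    val std = max(sqrt(sq / out.size), 1.0 / sqrt(out.size.toDouble()))
    for (j in out.indices) out[j] = ((out[j] - mean) / std).toFloat()
    return out
}
```

On a device, the cropped face would first be resized to 160 × 160 (for example with `Bitmap.createScaledBitmap`) before its pixels are read.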

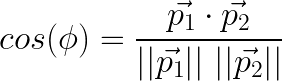

Well, the FaceNet model generates similar face vectors for similar faces. Here, by the term "similar", we mean vectors that point in the same direction. We calculate this similarity by measuring the cosine of the angle between the two given 128-dimensional vectors.
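For reference, the cosine similarity between two embeddings $\mathbf{a}, \mathbf{b} \in \mathbb{R}^{128}$ is given by the standard formula below. It equals $1$ when the vectors point in the same direction, $0$ when they are orthogonal, and $-1$ when they point in opposite directions.

$$
\cos\theta = \frac{\mathbf{a}\cdot\mathbf{b}}{\lVert\mathbf{a}\rVert\,\lVert\mathbf{b}\rVert}
= \frac{\sum_{i=1}^{128} a_i b_i}{\sqrt{\sum_{i=1}^{128} a_i^2}\,\sqrt{\sum_{i=1}^{128} b_i^2}}
$$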

In this app, we generate one such vector for the face to be recognized and compare it with the vector stored for each known person, computing the similarity of each pair. The person whose vector has the highest similarity is our desired output.
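A minimal Kotlin sketch of this comparison, assuming the embeddings are plain `FloatArray`s and the known people are held in a `Map<String, FloatArray>`. `cosineSimilarity` and `bestMatch` are hypothetical helpers written for illustration, not the app's own code.

```kotlin
import kotlin.math.sqrt

// Cosine of the angle between two embeddings of equal length.
// Returns 0 if either vector has zero length (the angle is undefined then).
fun cosineSimilarity(a: FloatArray, b: FloatArray): Float {
    require(a.size == b.size) { "Embeddings must have the same length" }
    var dot = 0.0
    var normA = 0.0
    var normB = 0.0
    for (i in a.indices) {
        dot += a[i] * b[i]
        normA += a[i] * a[i]
        normB += b[i] * b[i]
    }
    val denom = sqrt(normA) * sqrt(normB)
    return if (denom == 0.0) 0f else (dot / denom).toFloat()
}

// Hypothetical: returns the name whose stored embedding is most similar to `query`,
// together with its score, or null when no subjects are stored.
fun bestMatch(query: FloatArray, subjects: Map<String, FloatArray>): Pair<String, Float>? =
    subjects.map { (name, stored) -> name to cosineSimilarity(query, stored) }
        .maxByOrNull { it.second }
```

As written, `bestMatch` always returns some name when at least one subject exists, even for a face that resembles nobody; whether to add a minimum-score threshold is a design decision this sketch leaves open.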

You can download the FaceNet Keras .h5 file from this repo.

Usage

So, a user can store images on their device in a specific folder. For instance, if the user wants the app to recognize two people, "Rahul" and "Neeta", they need to create two directories named "Rahul" and "Neeta" and store each person's images inside the corresponding directory.

- images/
  - Rahul/
    - image_rahul.png
  - Neeta/
    - image_neeta.png
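A minimal sketch of reading this layout with plain `java.io.File`, assuming the app already has read access to the `images` directory (where that directory lives and which storage permissions are needed depend on the Android version). `scanSubjects` and the set of accepted file extensions are hypothetical.

```kotlin
import java.io.File

// Hypothetical helper: maps each subdirectory of `imagesDir` (one per person) to the
// image files inside it. The accepted extensions are an assumption.
fun scanSubjects(
    imagesDir: File,
    extensions: Set<String> = setOf("png", "jpg", "jpeg")
): Map<String, List<File>> {
    val personDirs = imagesDir.listFiles()?.filter { it.isDirectory } ?: return emptyMap()
    return personDirs.sortedBy { it.name }.associate { dir ->
        val images = dir.listFiles()
            ?.filter { it.isFile && it.extension.lowercase() in extensions }
            ?.sortedBy { it.name }
            .orEmpty()
        // The directory name doubles as the person's label.
        dir.name to images
    }
}
```

For the tree above, this would yield `{Neeta=[image_neeta.png], Rahul=[image_rahul.png]}` (as `File` objects), with each directory name serving as the label.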

The app will then process these images and classify these people thereafter. For face detection, Firebase ML Kit is used; it fetches bounding boxes for all the faces present in each image and camera frame.
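Before cropping, it can help to clamp each bounding box to the image, since a box around a face near the border may extend past the frame. The sketch below shows the idea on a plain row-major ARGB `IntArray`; `Box` is a hypothetical stand-in for `android.graphics.Rect`, and `clampBox` and `cropPixels` are illustrative names, not the app's code.

```kotlin
// Hypothetical stand-in for android.graphics.Rect (right and bottom are exclusive).
data class Box(val left: Int, val top: Int, val right: Int, val bottom: Int)

// Clamps `box` to a width x height image; returns null if nothing of it remains.
fun clampBox(box: Box, width: Int, height: Int): Box? {
    val clamped = Box(
        box.left.coerceIn(0, width),
        box.top.coerceIn(0, height),
        box.right.coerceIn(0, width),
        box.bottom.coerceIn(0, height)
    )
    return if (clamped.right > clamped.left && clamped.bottom > clamped.top) clamped else null
}

// Copies the pixels inside `box` out of a row-major ARGB buffer of size width x height.
fun cropPixels(pixels: IntArray, width: Int, height: Int, box: Box): IntArray? {
    val b = clampBox(box, width, height) ?: return null
    val cropW = b.right - b.left
    val cropH = b.bottom - b.top
    val out = IntArray(cropW * cropH)
    for (y in 0 until cropH) {
        // Copy one row of the crop at a time.
        System.arraycopy(pixels, (b.top + y) * width + b.left, out, y * cropW, cropW)
    }
    return out
}
```

On Android, the clamped box could instead be passed to `Bitmap.createBitmap(source, x, y, width, height)` to get the cropped face as a `Bitmap`.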

This is different from existing face recognition apps, which are programmed to recognize only a fixed set of people: if a new person is to be recognized, the system (app) has to be modified to include the new person as well. Here, adding a person only requires a new directory of their images.

Working

The app's working is described in the steps below. The corresponding code is present in the file written in brackets.

- Scan the `images` folder present in the internal storage. Next, parse all the images within the `images` folder and store the names of the subdirectories within `images`. For every image, collect the bounding box coordinates (as a `Rect`) using Firebase ML Kit. Crop the face from the image (the one collected from the user's storage) using the bounding box coordinates.

- We now have a list of cropped `Bitmap`s of the faces present in the images. Next, feed each cropped `Bitmap` to the FaceNet model and get its embedding (as a `FloatArray`). Now, we create a `HashMap<String,FloatArray>` object where we store the names of the subdirectories as keys and the embeddings as their corresponding values.
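The map-building step above can be sketched as follows. `buildSubjectEmbeddings` is hypothetical, the generic `Face` type stands in for a cropped `Bitmap`, and `embed` stands in for running the FaceNet model on one face. Because a `HashMap<String,FloatArray>` holds one embedding per name, this sketch assumes one face per person and keeps the first one found; how the app treats several images per person is not described here.

```kotlin
// Hypothetical: builds the subject -> embedding map described above.
// `faces` maps each subdirectory name to the cropped face(s) found in its images;
// `embed` stands in for running the FaceNet model on a single face.
fun <Face> buildSubjectEmbeddings(
    faces: Map<String, List<Face>>,
    embed: (Face) -> FloatArray
): HashMap<String, FloatArray> {
    val result = HashMap<String, FloatArray>()
    for ((name, faceList) in faces) {
        // Skip people for whom no face was detected.
        val first = faceList.firstOrNull() ?: continue
        // One embedding per name: the one computed from this person's first face.
        result[name] = embed(first)
    }
    return result
}
```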

The above procedure is carried out only on the app's startup. The steps below will execute on each camera frame.

- Using `androidx.camera.core.ImageAnalysis`, we construct a `FrameAnalyser` class which processes the camera frames. For a given frame, we first get the bounding box coordinates (as a `Rect`) of all the faces present in the frame, then crop each face from the frame using these boxes.

- Feed the cropped faces to the FaceNet model to generate their embeddings. Using cosine similarity, calculate the similarity scores against all subjects (which we stored earlier as a `HashMap<String,FloatArray>`). The subject with the highest similarity is selected. The final output is then stored as a `Prediction` and passed to the `BoundingBoxOverlay`, which draws the boxes and text.
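The per-frame matching can be sketched as follows, assuming each detected face has already been paired with its FaceNet embedding. `FaceBox`, `predictFrame`, and the fields given to `Prediction` here are hypothetical stand-ins; the app's own `Prediction` class may hold different data.

```kotlin
import kotlin.math.sqrt

// Hypothetical stand-ins: FaceBox for the detected Rect, Prediction for the overlay's input.
data class FaceBox(val left: Int, val top: Int, val right: Int, val bottom: Int)
data class Prediction(val box: FaceBox, val label: String, val score: Float)

// Cosine of the angle between two embeddings (0 if either has zero length).
fun cosineSimilarity(a: FloatArray, b: FloatArray): Float {
    require(a.size == b.size) { "Embeddings must have the same length" }
    var dot = 0.0
    var normA = 0.0
    var normB = 0.0
    for (i in a.indices) {
        dot += a[i] * b[i]
        normA += a[i] * a[i]
        normB += b[i] * b[i]
    }
    val denom = sqrt(normA) * sqrt(normB)
    return if (denom == 0.0) 0f else (dot / denom).toFloat()
}

// For each (box, embedding) in the frame, pick the stored subject with the highest
// cosine similarity. Faces are dropped only when no subjects are stored.
fun predictFrame(
    faces: List<Pair<FaceBox, FloatArray>>,
    subjects: Map<String, FloatArray>
): List<Prediction> = faces.mapNotNull { (box, embedding) ->
    subjects.map { (name, stored) -> name to cosineSimilarity(embedding, stored) }
        .maxByOrNull { it.second }
        ?.let { (name, score) -> Prediction(box, name, score) }
}
```

The resulting list is what an overlay would draw: one box and one label per detected face.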